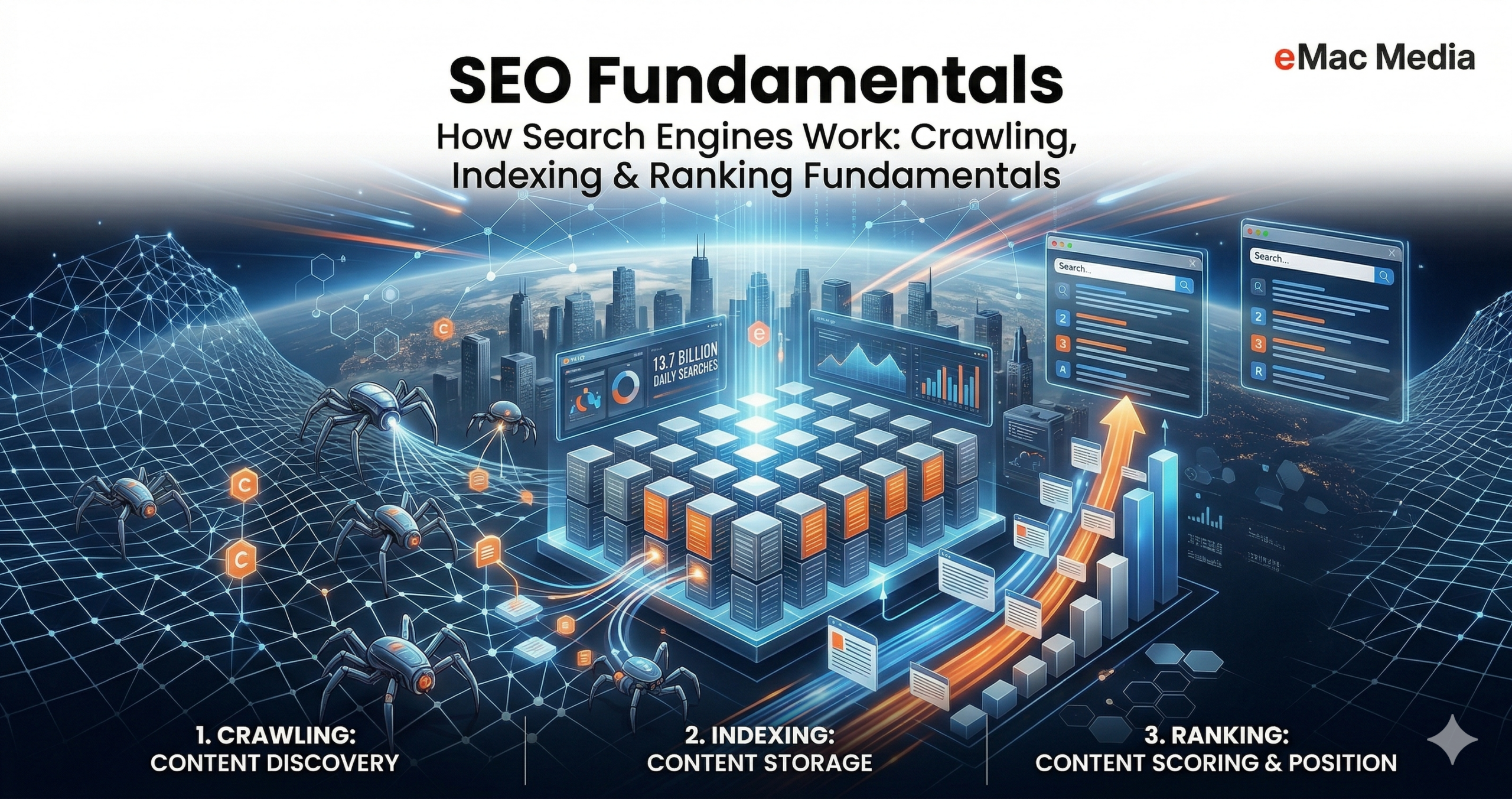

Search engines are the gateway to the internet's vast information landscape. Google alone processes over 13.7 billion searches per day — roughly 5 trillion annually — while maintaining an index exceeding 100 million gigabytes. Behind every query lies a sophisticated three-stage pipeline: crawling (discovering content), indexing (organizing and storing it), and ranking (serving the most relevant results). This article examines the technical architecture, algorithms, and design principles that power this pipeline — from the mechanics of web crawlers to the AI-driven ranking systems that determine what users see.

The Three-Stage Pipeline

All major search engines follow the same core workflow, regardless of their interface differences. When a user enters a query, the engine does not search the live web in real time. Instead, it queries a pre-built index — a massive, structured database compiled through continuous crawling and processing. The three stages are sequential and interdependent: if crawling is blocked, nothing gets indexed; if indexing fails, ranking is impossible. Google's official documentation describes this pipeline in detail.

Not all pages make it through each stage. Pages may be blocked from crawling, excluded during indexing due to low quality or duplication, or simply never surfaced in results because they lack relevance for any query.

Stage 1: Crawling — Discovering the Web

How Crawlers Work

Crawling is the discovery process in which search engines deploy automated programs — commonly called crawlers, spiders, or bots — to traverse the web and find content. Google's primary crawler, Googlebot, begins with pages it already knows about (from previous crawls or submitted sitemaps), then follows every internal and external link it encounters to discover new URLs. This link-following behavior is fundamental: the web's hyperlink structure is the primary mechanism through which crawlers navigate from page to page.

Googlebot operates as two distinct crawler types — a smartphone user agent and a desktop user agent — with the smartphone version carrying primary weight in most indexing decisions, reflecting Google's mobile-first indexing approach. Since Google's "evergreen" update in 2019, both versions stay current with the latest Chromium releases, enabling them to handle complex JavaScript-heavy sites.

URL Discovery Methods

Crawlers discover new URLs through multiple pathways:

- Link following: Extracting hyperlinks from already-crawled pages and adding them to the crawl queue.

- XML sitemaps: Website owners submit structured files listing important URLs, which crawlers use to find pages that may not be well-linked. Proper sitemap configuration is a core component of technical SEO.

- External references: Links from other websites pointing to new content.

- API pings and signals: Notifications from content delivery networks, sitemaps, and other sources indicating content changes.
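The link-following pathway can be sketched as a breadth-first traversal over a queue of discovered URLs (often called a frontier). This is a minimal illustration, not how Googlebot is implemented: the `crawl` function, the `LinkExtractor` class, and the in-memory `PAGES` site are all hypothetical, and a real crawler would also handle politeness delays, robots.txt, and prioritization.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urldefrag


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(seed_urls, fetch, max_pages=100):
    """Breadth-first discovery: fetch a page, extract links, queue unseen URLs."""
    frontier = deque(seed_urls)   # URLs waiting to be crawled
    seen = set(seed_urls)         # URLs already queued, to avoid repeats
    order = []
    while frontier and len(order) < max_pages:
        url = frontier.popleft()
        html = fetch(url)
        if html is None:          # unknown or unreachable page
            continue
        order.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop #fragments before queuing.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return order


# Hypothetical three-page site, held in memory so the sketch runs offline.
PAGES = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b#top">B</a>',
    "https://example.com/a": '<a href="/b">B</a>',
    "https://example.com/b": '<a href="/">Home</a>',
}

crawl_order = crawl(["https://example.com/"], PAGES.get)
print(crawl_order)
```

The `seen` set is what keeps the crawler from looping forever on sites whose pages link back to each other.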

Robots.txt and Crawl Directives

Before crawling a site, a crawler's first stop is the robots.txt file, located at the site's root directory. This file acts as a set of instructions, specifying which parts of the site crawlers can or cannot access. It uses directives such as User-agent (specifying which crawler the rule applies to), Disallow (blocking specific paths), Allow (permitting access), and Sitemap (pointing to the site's XML sitemap).

Pages can also use HTML meta tags like noindex (exclude from the index) and nofollow (do not follow outbound links) to control crawler behavior at the page level.

Robots.txt controls access, while meta robots tags control indexing and link following. A page must be crawlable for its meta directives to be discovered and obeyed, so blocking a page in robots.txt while also marking it noindex creates a conflict: the noindex tag will never be seen.
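To make the directives concrete, the sketch below parses a small robots.txt with Python's standard-library `urllib.robotparser`. The file contents, the `ExampleBot` user agent, and the example.com URLs are invented for illustration. Note that Python's parser applies the first matching rule, while Google documents that it applies the most specific (longest) matching rule; the rules here are ordered so that both approaches agree.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an example site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /

Sitemap: https://example.com/sitemap.xml
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())

blog_allowed = robots.can_fetch("ExampleBot", "https://example.com/blog/post")
private_allowed = robots.can_fetch("ExampleBot", "https://example.com/private/report")
sitemaps = robots.site_maps()   # available in Python 3.8+

print(blog_allowed)      # True
print(private_allowed)   # False
print(sitemaps)          # ['https://example.com/sitemap.xml']
```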

Crawl Budget

Search engines cannot crawl the entire web continuously, so they allocate resources strategically through a concept known as crawl budget. Google defines crawl budget as the intersection of two factors:

| Factor | Description |
|---|---|
| Crawl Capacity Limit | The maximum number of simultaneous connections Googlebot uses, determined by server health, response times, and error rates. |
| Crawl Demand | How much Google wants to crawl a site, influenced by popularity, content freshness, site events, and perceived inventory. |

The effective crawl budget is the minimum of these two values. Even if a server can handle more crawling, Google will not crawl beyond its demand. Conversely, high demand is throttled if the server responds slowly. Site owners can increase crawl budget by improving server performance and optimizing content quality.
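As a toy illustration of the "minimum of the two" relationship, the snippet below expresses both factors in a made-up unit of pages per day. Google does not publish these values for a site, so the function name and all numbers are assumptions chosen only to show which factor becomes the bottleneck.

```python
def effective_crawl_budget(capacity_limit, crawl_demand):
    """Return the smaller of the two factors (hypothetical pages-per-day units)."""
    return min(capacity_limit, crawl_demand)


# Fast server, modest demand: demand is the bottleneck.
demand_bound = effective_crawl_budget(capacity_limit=50_000, crawl_demand=8_000)

# Popular site on a slow server: capacity is the bottleneck.
capacity_bound = effective_crawl_budget(capacity_limit=2_000, crawl_demand=30_000)

print(demand_bound, capacity_bound)   # 8000 2000
```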

Rendering JavaScript

Modern websites rely heavily on JavaScript to generate content dynamically. Google now routinely renders pages using headless Chromium before deciding what to index. The rendering workflow involves fetching the HTML, queuing the page for rendering, executing JavaScript, and then processing the rendered DOM alongside the original HTML.

Research by Vercel confirmed that 100% of HTML pages in their study resulted in full-page renders, including pages with complex JavaScript interactions, and that dynamically loaded API content was successfully indexed. While a rendering queue exists, its impact is less significant than previously thought — most pages are rendered within minutes rather than days. However, other search engines like Bing still struggle with JavaScript, making server-side rendering a best practice for maximum cross-engine visibility.


Stage 2: Indexing — Understanding and Storing Content

The Indexing Process

After a page is crawled and rendered, the search engine must understand what it contains. Indexing involves parsing the page's content — headings, body text, anchor text, images, structured data (JSON-LD), and metadata — then storing this information in a searchable database.

Google's indexing process includes several key operations:

- Parsing: Extracting headings, anchor text, structured data, and image alt attributes from the rendered HTML.

- Canonical selection: Identifying duplicate or near-duplicate pages, clustering them, and selecting one "canonical" version as the representative page for search results.

- Link graph merging: Consolidating internal and external links pointing to the canonical URL into a unified authority score.

- Signal collection: Gathering metadata such as the page's language, geographic relevance, and usability characteristics.
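The parsing step can be sketched with Python's built-in `html.parser`. This is a simplified illustration under stated assumptions: the `PageParser` class and the sample HTML are hypothetical, nested elements (such as a link inside a heading) are not handled, and a production indexer would work on the fully rendered DOM.

```python
import json
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Extracts headings, anchor text, image alt text, and JSON-LD blocks."""

    def __init__(self):
        super().__init__()
        self.headings = []         # (tag, text)
        self.anchors = []          # (href, anchor text)
        self.image_alts = []
        self.structured_data = []  # parsed JSON-LD objects
        self._capture = None       # tag whose text is currently being collected
        self._buffer = []
        self._href = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in {"h1", "h2", "h3"}:
            self._capture, self._buffer = tag, []
        elif tag == "a" and attrs.get("href"):
            self._capture, self._buffer, self._href = "a", [], attrs["href"]
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self._capture, self._buffer = "script", []
        elif tag == "img" and attrs.get("alt"):
            self.image_alts.append(attrs["alt"])

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != self._capture:
            return
        text = "".join(self._buffer).strip()
        if tag == "a":
            self.anchors.append((self._href, text))
        elif tag == "script":
            self.structured_data.append(json.loads(text))
        else:
            self.headings.append((tag, text))
        self._capture = None


# Hypothetical page fragment.
HTML = """
<h1>Apple Pie Recipe</h1>
<p>See our <a href="/crust">pie crust guide</a>.</p>
<img src="pie.jpg" alt="Sliced apple pie">
<script type="application/ld+json">{"@type": "Recipe", "name": "Apple Pie"}</script>
"""

page = PageParser()
page.feed(HTML)
print(page.headings, page.anchors, page.image_alts, page.structured_data)
```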

The Inverted Index

At the heart of search engine storage lies the inverted index, a data structure that maps terms to the documents containing them. Unlike a forward index (which lists terms per document), an inverted index reverses this relationship: it starts with a term and points to all documents where that term appears.

When a user submits a query, the engine retrieves the postings lists for each query term. For single-term queries, it returns the associated document list. For multi-term queries like "apple pie," it intersects the postings lists to find documents containing both terms. This structure enables the sub-second retrieval times users expect, even across hundreds of billions of pages.
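A minimal sketch of this structure follows, assuming whitespace tokenization and a tiny hypothetical corpus. Function names such as `build_inverted_index` and `search` are illustrative; production indexes also store term positions, compress postings, and apply far richer text analysis.

```python
from collections import defaultdict


def tokenize(text):
    """Naive tokenizer: lowercase and split on whitespace."""
    return text.lower().split()


def build_inverted_index(documents):
    """Map each term to a sorted postings list of document IDs."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return {term: sorted(ids) for term, ids in index.items()}


def intersect(a, b):
    """Merge-intersect two sorted postings lists in linear time."""
    i = j = 0
    out = []
    while i < len(a) and j < len(b):
        if a[i] == b[j]:
            out.append(a[i])
            i += 1
            j += 1
        elif a[i] < b[j]:
            i += 1
        else:
            j += 1
    return out


def search(index, query):
    """Return IDs of documents containing every query term."""
    postings = [index.get(term, []) for term in tokenize(query)]
    if not postings:
        return []
    postings.sort(key=len)   # start with the rarest term to shrink work early
    result = postings[0]
    for plist in postings[1:]:
        result = intersect(result, plist)
    return result


# Hypothetical corpus.
DOCS = {
    1: "apple pie recipe",
    2: "apple orchard tours",
    3: "pumpkin pie and apple pie",
}

index = build_inverted_index(DOCS)
print(search(index, "apple pie"))   # [1, 3]
```

Keeping postings lists sorted is what allows the intersection to run as a single linear merge rather than a nested comparison.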

Google's Caffeine Infrastructure

Google's indexing infrastructure, known as Caffeine, was introduced in 2010 to handle the web's exponential growth. Unlike the previous layer-based model, in which the main layer was refreshed only periodically, Caffeine uses incremental indexing: it continuously crawls smaller portions of the web and updates the index in near real time. At launch, Google reported that Caffeine processed hundreds of thousands of pages in parallel every second, occupied nearly 100 million gigabytes of storage, and added hundreds of thousands of gigabytes daily.

Distributed Architecture

Operating at global scale requires a distributed systems approach. Google's index is split using sharding (primarily document partitioning), where each shard contains a subset of the total dataset stored on specific nodes. Every query is sent to all shards simultaneously, with results aggregated centrally. Fault tolerance is achieved through massive replication — data is copied across multiple machines and data centers, with replicas taking over instantly if a server fails.
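The scatter-gather pattern behind document partitioning can be sketched as follows. Everything here is a simplifying assumption: shards are plain dictionaries in one process, documents are assigned by `doc_id % NUM_SHARDS`, per-term scores are precomputed made-up numbers, and replication is left out entirely.

```python
import heapq
from concurrent.futures import ThreadPoolExecutor

NUM_SHARDS = 3   # hypothetical shard count


def shard_for(doc_id):
    """Document partitioning: each document lives on exactly one shard."""
    return doc_id % NUM_SHARDS


def build_shards(scored_docs):
    shards = [dict() for _ in range(NUM_SHARDS)]
    for doc_id, term_scores in scored_docs.items():
        shards[shard_for(doc_id)][doc_id] = term_scores
    return shards


def search_shard(shard, term, k):
    """Each shard returns only its local top-k hits as (score, doc_id)."""
    hits = [(scores[term], doc_id) for doc_id, scores in shard.items() if term in scores]
    return heapq.nlargest(k, hits)


def search_all(shards, term, k=2):
    """Scatter the query to every shard, then merge the partial results."""
    with ThreadPoolExecutor(max_workers=len(shards)) as pool:
        partials = list(pool.map(lambda shard: search_shard(shard, term, k), shards))
    merged = [hit for part in partials for hit in part]
    return [doc_id for _score, doc_id in heapq.nlargest(k, merged)]


# Hypothetical precomputed relevance scores per document and term.
DOCS = {
    1: {"pie": 0.9, "apple": 0.4},
    2: {"apple": 0.8},
    3: {"pie": 0.5},
    4: {"pie": 0.7, "apple": 0.1},
    5: {"apple": 0.6},
}

shards = build_shards(DOCS)
top_pie = search_all(shards, "pie")
print(top_pie)   # [1, 4]
```

Because each shard returns only its own top-k, the central merge handles at most `k * NUM_SHARDS` candidates regardless of how large the full index is.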

Common reasons a crawled page is not indexed include: low-quality or thin content, duplicate content (non-canonical pages), noindex meta directives, technical errors (4xx or 5xx responses), and manual penalties for violating webmaster guidelines. An SEO audit can identify exactly which pages are affected and why.

Stage 3: Ranking — Delivering Relevant Results

From Keywords to Intent

When a user types a query, the search engine does not re-crawl the web. Instead, it searches its pre-built index and applies ranking algorithms to determine which pages are the best match. Relevance is determined by hundreds of factors, including the user's location, language, device, and the nature of the query itself.

Modern ranking has evolved far beyond simple keyword matching. Search engines now analyze search intent — the underlying purpose behind a query:

| Intent Type | Description | Example |
|---|---|---|
| Informational | Seeking knowledge or answers | "How do solar panels work" |
| Navigational | Looking for a specific website | "YouTube login" |
| Commercial | Researching before a purchase | "Best laptops 2026" |
| Transactional | Ready to buy or take action | "Buy iPhone 17 Pro" |

The search engine assesses the query against these intent categories, then prioritizes pages whose content format and depth match the inferred intent. Understanding search intent is critical for any content marketing strategy — creating content that mismatches intent will underperform regardless of technical optimization.

Classical Relevance Scoring: TF-IDF and BM25

The foundational approach to relevance scoring is TF-IDF (Term Frequency–Inverse Document Frequency), which assigns higher importance to terms that appear frequently in a document but are rare across the entire corpus. This ensures that meaningful, specific terms carry more weight than common words like "the" or "is."
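One common formulation can be sketched in a few lines. TF-IDF has several variants; this one assumes term frequency normalized by document length and an unsmoothed logarithmic IDF, and the three-document corpus is invented for illustration.

```python
import math
from collections import Counter


def tf_idf(term, doc_tokens, corpus):
    """TF-IDF with length-normalized TF and IDF = ln(N / document frequency)."""
    tf = Counter(doc_tokens)[term] / len(doc_tokens)
    df = sum(1 for doc in corpus if term in doc)
    if df == 0:
        return 0.0
    return tf * math.log(len(corpus) / df)


# Hypothetical corpus, pre-tokenized.
CORPUS = [
    "the solar panel converts light".split(),
    "the panel is on the roof".split(),
    "the cat is on the mat".split(),
]

score_the = tf_idf("the", CORPUS[0], CORPUS)      # appears in every document
score_panel = tf_idf("panel", CORPUS[0], CORPUS)  # appears in two documents
score_solar = tf_idf("solar", CORPUS[0], CORPUS)  # appears in one document

print(score_the, score_panel, score_solar)
```

In this variant a word that appears in every document gets an IDF of ln(1) = 0, so "the" contributes nothing, while the rarer "solar" outscores "panel".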

BM25 (Best Matching 25) is the evolution of TF-IDF and has become the default algorithm in major search platforms like Elasticsearch, Azure AI Search, and Apache Solr. BM25 improves upon TF-IDF in two critical ways:

- Term frequency saturation: Beyond a certain point, additional occurrences of a term yield diminishing returns, preventing keyword-stuffed documents from dominating results.

- Document length normalization: Scores are adjusted relative to the average document length, so long documents are not rewarded simply for containing more words and short documents are not unfairly penalized.
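Both properties are visible in a direct implementation of the scoring formula. The sketch below uses the widely cited defaults `k1=1.5` and `b=0.75` and the smoothed IDF form popularized by Lucene; the corpus is hypothetical, and every document has the same length so that only the saturation effect shows up in the scores.

```python
import math
from collections import Counter


def bm25_score(query_terms, doc_tokens, corpus, k1=1.5, b=0.75):
    """Score one document against a query using BM25."""
    n_docs = len(corpus)
    avg_len = sum(len(doc) for doc in corpus) / n_docs
    counts = Counter(doc_tokens)
    # Length normalization: longer-than-average documents get a larger divisor.
    norm = k1 * (1 - b + b * len(doc_tokens) / avg_len)
    score = 0.0
    for term in query_terms:
        df = sum(1 for doc in corpus if term in doc)
        idf = math.log(1 + (n_docs - df + 0.5) / (df + 0.5))
        tf = counts[term]
        # Saturation: this fraction approaches (k1 + 1) as tf grows.
        score += idf * tf * (k1 + 1) / (tf + norm)
    return score


# Hypothetical corpus: equal-length documents with 1, 5, 10, and 0 matches.
CORPUS = [
    ["solar"] * 1 + ["filler"] * 9,
    ["solar"] * 5 + ["filler"] * 5,
    ["solar"] * 10,
    ["wind"] * 10,
]

scores = [bm25_score(["solar"], doc, CORPUS) for doc in CORPUS]
print(scores)
```

Going from 1 to 5 occurrences raises the score far more than going from 5 to 10, which is the diminishing-returns behavior that blunts keyword stuffing.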

PageRank: The Link-Based Foundation

PageRank, named after Google co-founder Larry Page, was the foundational algorithm that distinguished Google from earlier search engines. It estimates a page's importance by analyzing the quality and quantity of links pointing to it. The core insight: a page linked to by many authoritative pages is likely itself authoritative.

The algorithm models a "random surfer" who navigates the web by randomly clicking links. Pages that this hypothetical surfer visits more frequently receive higher PageRank scores. While PageRank remains a component of Google's ranking system, its relative importance has diminished as hundreds of additional signals have been incorporated — which is why modern link building strategies focus on relevance and authority, not just volume.
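The random-surfer model can be approximated with simple power iteration. This sketch follows the textbook formulation with a damping factor of 0.85 (the probability that the surfer follows a link rather than jumping to a random page); the four-page link graph is hypothetical, and the code assumes every link target also appears as a key in the graph.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank over a dict of page -> list of outlinks."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page receives the "random jump" share first.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            if outlinks:
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # Dangling page: spread its rank evenly over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical site graph; "orphan" links out but nothing links to it.
LINKS = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["home", "about"],
    "orphan": ["home"],
}

ranks = pagerank(LINKS)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this graph "home" ends up with the highest score because every other page links to it, while "orphan" receives only the baseline random-jump share.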

AI-Powered Ranking: RankBrain, BERT, and MUM

The most transformative recent developments in search ranking involve artificial intelligence and natural language processing. Google's shift from keyword matching to AI-driven intent interpretation represents the largest transformation in search since its creation.

RankBrain (2015) is a machine learning system that helps Google interpret unfamiliar or ambiguous queries. It continuously learns from user behavior, promoting results that satisfy searchers and demoting those that do not.

BERT (Bidirectional Encoder Representations from Transformers, 2019) enabled Google to understand language bidirectionally — considering the entire sentence context rather than reading left to right. For example, for the query "can someone get a visa for their brother," BERT correctly identifies that the brother needs the visa, whereas previous systems might have been confused.

MUM (Multitask Unified Model, 2021) is reportedly 1,000 times more powerful than BERT. It is a multimodal model capable of understanding text, images, and video across 75 languages. MUM can synthesize information from multiple sources and formats to answer complex, nuanced questions — for instance, comparing hiking gear requirements between different terrains and seasons.

The shift to AI-powered ranking means search engines increasingly understand meaning, not just keywords. Optimizing for AI-driven search requires a fundamentally different approach — one that prioritizes entity authority, topical depth, and natural language. Learn how eMac Media's AI & Search Visibility services help brands adapt.

The Knowledge Graph and Entity Understanding

Google's Knowledge Graph is an entity database that stores facts and relationships about people, places, brands, and concepts. Rather than matching keywords, the Knowledge Graph identifies entities within a query and uses context to disambiguate them — distinguishing, for example, between "Apple" the company and "apple" the fruit.

Entity understanding powers features like Knowledge Panels, direct answers, and rich results. It enables Google to respond to direct questions by drawing on a structured web of relationships rather than simply scanning for keyword matches.

Modern Ranking Factors (2026)

While Google does not publish a definitive list of all ranking factors, the core elements widely recognized for 2026 include:

- Content quality and relevance: High-quality, original content that satisfies user intent remains the most important factor.

- Backlink quality and authority: Links from trusted, authoritative websites still serve as key credibility signals.

- Mobile-friendliness and Core Web Vitals: Page speed, interactivity, and visual stability directly influence rankings.

- E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness): Google evaluates whether content creators demonstrate genuine expertise and whether the information is trustworthy.

- Structured data (Schema markup): Helps search engines understand page content more precisely and makes pages eligible for rich results such as review stars, recipe cards, and product details.

- HTTPS security: Encrypted connections are a confirmed ranking signal.

- User engagement signals: Metrics like click-through rates, dwell time, and pogo-sticking influence how results are adjusted over time.

Serving Results: The Final Step

When results are served, several additional processes occur:

- Query analysis: The engine examines language, intent, spelling corrections, location signals, and device type.

- Document retrieval: A pool of relevant documents is pulled from the index.

- Scoring and sorting: Ranking algorithms score and order the documents.

- SERP layout assembly: The engine decides which features to display — organic results, images, maps, videos, Knowledge Panels, AI Overviews, or other modules.

Google's serving layer now includes advanced features such as passage retrieval (surfacing a single paragraph from a long article for a specific long-tail query) and AI Overviews (generative summaries that appear above organic listings). The traditional "10 blue links" layout has given way to a rich, multi-format results page tailored to each query's intent.

Search engines have remained fundamentally stable in their three-stage architecture for over two decades, even as the underlying technologies have undergone radical transformation. Crawlers evolved from simple link-followers into JavaScript-rendering engines. Indexing scaled from centralized databases to globally distributed systems. And ranking shifted from keyword matching to AI-driven intent understanding. Understanding these fundamentals is essential for anyone building, optimizing, or studying the systems that organize the world's information.
